Einstein Didn't Remember the Speed of Light

The Cognitive Offloading Revolution & AI Assistance.

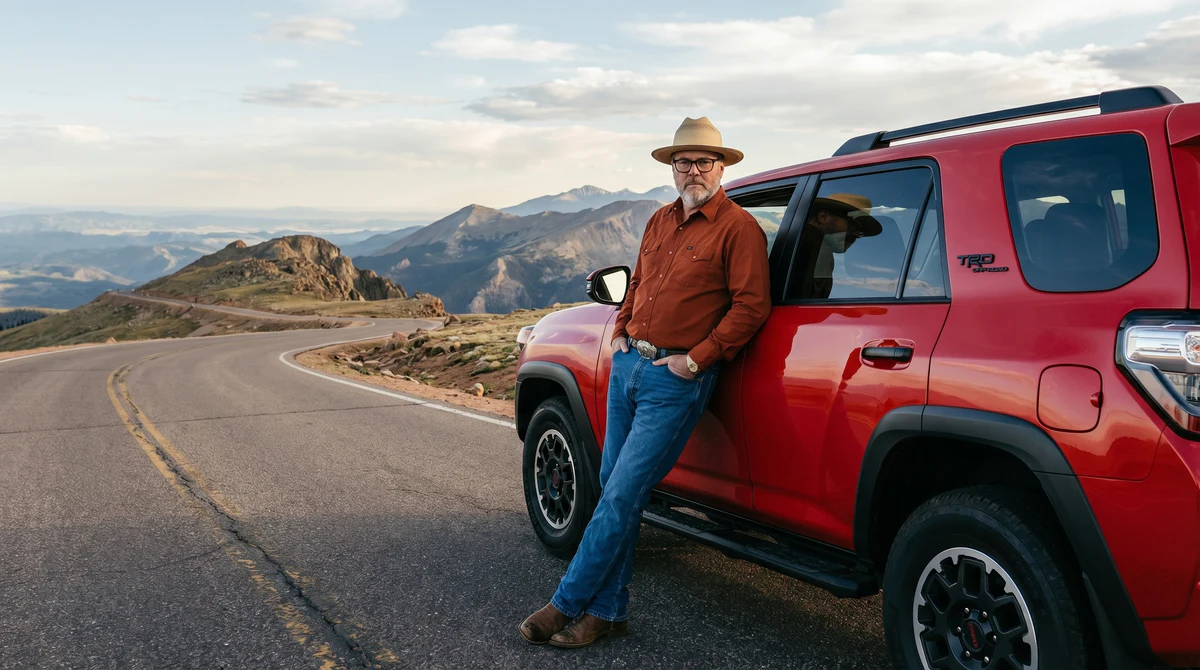

Einstein famously said he didn't bother remembering things he could look up. Thirty years into this career, I think I finally understand what he meant.

Not in a flattering-comparison-to-Einstein kind of way. In a practical, this-is-how-I-work-now kind of way.

The memory tax used to be real

For most of my career, professional competence had a memory tax attached to it. You were expected to carry CLI flags in your head. Webpack config patterns. That one obscure TypeScript generic you use twice a year. The exact syntax for Next.js route handlers.

Knowing things was part of the job. Forgetting them was a liability.

Google changed that a little. You could offload the long tail of obscure knowledge and just look it up. But you still had to know what to search for, evaluate the results, translate the Stack Overflow answer into something that actually fit your situation, and then implement it.

The cognitive load was reduced. Not eliminated.

AI changed the equation

What AI does differently is not just faster lookup. It's contextual recall with execution attached.

I don't remember the revalidatePath signature in Next.js. I don't need to. I describe what I'm trying to accomplish and I get working code back in seconds. I don't remember every git flag for a rebase scenario. I describe the situation and get the exact command.

The difference between Google and AI for this kind of thing is the difference between a library and a colleague who already read the library and can just tell you what you need.

There's a term for this: cognitive offloading. Humans have always done it. Writing things down, building tools, externalizing memory to systems. AI is just the most aggressive version of it yet.

The moat shifted

Here's what's interesting about this moment.

The bar for execution dropped. Almost anyone can write working code now with enough prompting. Deploy a Next.js app. Build a Postgres schema. Wire up an API.

But the bar for judgment went up.

Because now the question isn't "can you write it." The question is "do you know what good looks like." Do you know when the AI output is wrong? Do you know when the architecture it suggested will collapse at scale? Do you know what to build in the first place?

Thirty years of shipping large-scale commerce systems. That's not in the prompt. That's what I bring to the prompt.

The people winning right now are not the fastest prompters. They're the people with enough accumulated judgment to know what to do with the output.

Where BlackOps fits in

I've heard the critique. AI apps are just wrappers. There's some truth to it. A lot of what gets built on top of AI models is thin — a UI layer, a few prompt templates, a payment page.

But wrappers with the right wrapping on them are a different thing entirely.

BlackOps is not a generic content generation tool. It's structured AI infrastructure that does specific work, in a specific way, tuned to a specific kind of output. The brains, the voice system, the publishing workflow — all of it is opinionated. Built from what I know actually works, not from what sounds good in a pitch deck.

The AI inside it is a commodity. The structure around it is not.

That's the real question to ask about any AI product: what does the wrapper know that the model doesn't? What judgment is baked in? What decisions got made so the user doesn't have to make them every time?

If the answer is nothing, it's a thin wrapper. If the answer is thirty years of hard-won operational knowledge encoded into a system, that's a different product.

The practical upshot

I work faster now than I did five years ago. Not a little faster. Dramatically faster.

The cognitive load that used to go into remembering things now goes into deciding things. Evaluating. Directing. Knowing what to ask for and whether the answer is right.

Einstein didn't remember the speed of light because he trusted he could derive it when he needed it. I don't remember the exact git rebase flags because I trust I can get them in ten seconds.

Same move. Different century. Different tools.

The goal was never to have a good memory. The goal was to do good work. Those two things were linked for a long time. They're not anymore.

I wrote this post inside BlackOps, my content operating system for thinking, drafting, and refining ideas — with AI assistance.
