Why Your Best Work Gets 200 Views While Slop Gets 4.8 Million

The platform math that rewards repackaging over judgment, and why practitioners win long-term anyway

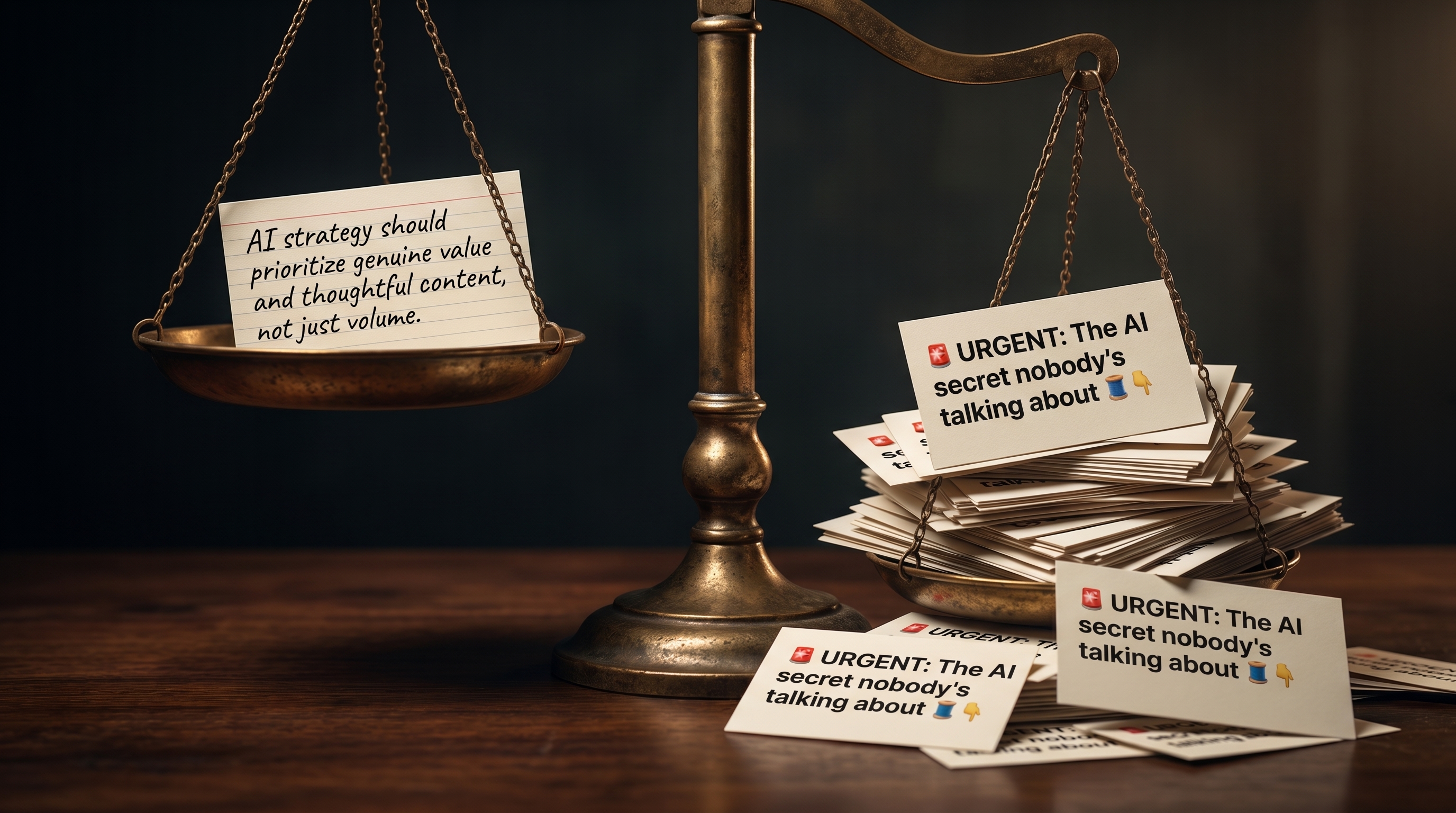

Your best work gets 200 views. A repackaged screen recording of someone else's talk gets 4.8 million. That's not an accident. It's the math of the platform, and right now it's actively punishing anyone trying to publish actual judgment.

Here's the thread I'm talking about. 22,000 likes. 3,500 reposts. 4.8 million views. It opens with "30 minutes. free. the person who created Claude Code." Then "watch the workshop. bookmark it." Then "worth more than every $500 course you almost bought." Then a video clip of someone presenting at an Anthropic event last May.

The thread is winning. And the interesting fact isn't that this kind of content exists, or that it's hollow. Both are obvious. The interesting fact is that it works at a scale that punishes anyone trying to publish real judgment, because they're competing for attention with people who've optimized every component of a post for the dopamine hit and removed every component that costs them something to write.

I want to walk through why this is happening, what it does to the people consuming it, and the bet I'm making by going the other way.

The pattern

Earlier this week I saw a different version of the same thread. A repackage of a 30-minute talk by Ivan Nardini, a Google DevRel engineer, given at Anthropic's "Code w/ Claude" event in May 2025. Ivan is the real deal. He co-teaches the official Agent2Agent protocol short course with engineers from Google and IBM. The talk itself covers Google's agent stack: ADK, MCP, Vertex AI Agent Engine, A2A.

The repackage opened with "Cancel your weekend plans," ran 7 sections, each ending with a follow CTA, and recommended Claude 3.7 Sonnet. Claude 3.7 Sonnet was retired on the Anthropic API on February 19, 2026. On Vertex AI, where the talk was actually demoed, it gets shut down May 11. Following that recommendation today gets you an error.

The thread also called A2A "the future nobody's talking about" and "the infrastructure layer of the coming AI economy." A2A was donated to the Linux Foundation in June 2025, hit v1.0 this Tuesday at Google Cloud Next 2026, and is in production at 150 enterprises. Three days before this thread told its readers it was a secret, Google announced it on the keynote stage of their flagship conference.

Same pattern. Different writer. Different talk. Identical structural moves.

There's a real talk by a real practitioner. There's a repackager who watched it at 2x speed. There's a hook engineered to trigger urgency. There's an authority claim that overstates the source ("Anthropic's team" / "the person who created Claude Code"). There are factual errors that anyone shipping with the stack would have caught immediately. There are CTAs every 200 words. And there is engagement that dwarfs anything an actual practitioner produces.

Why it works

I want to be careful here, because the easy move is to call this slop and move on. The harder move is to look at why the platform rewards it and what that's doing to the readers.

Three things make it work.

First, the urgency loop. "Cancel your weekend plans." "$500 course you almost bought." "You've been using Claude without knowing 40 of its features." Every one of these is a loss-aversion pull. The thread isn't selling information. It's selling the feeling that not engaging is costing you money. That feeling is more compulsive than curiosity, and the engagement numbers prove it.

Second, the borrowed authority. "The person who created Claude Code." "Anthropic's team just dropped." Both claims attach the writer to a real practitioner's credibility without the writer doing any work to earn their own. The reader's brain doesn't audit the citation. It registers "Anthropic" and "30 minutes" and feels like it's getting insider access. The repackager pays nothing for this. The practitioner pays in lost attribution.

Third, the appearance of structure. The 7-part breakdown. The numbered list. The diagrams that look like systems but aren't, with labels like "AI PROMPT LOGIC CORE" and "PROMPT VELOCITY & QUALITY." Those aren't concepts. They're words arranged to look like concepts. The visual does the same job as the copy: signals depth that isn't there, fast enough that the reader scrolls past before noticing it doesn't resolve.

The platform rewards all three. The audience, mostly, doesn't notice. The math works. 4.8 million views.

What it does to the reader

Here's the part I actually care about.

If you're an operator who ships, your timeline is your input. If your input is repackaged, error-laden, urgency-tuned content, your model of what's happening in the industry is wrong. You'll reach for tools that are deprecated. You'll be surprised by things that have been public for a year. You'll absorb the implicit message that everyone is making twelve thousand dollars a month building agents while you're still trying to get your first one to deploy. None of that is real, and none of it helps you ship.

Worse, you'll start to feel slow. Not because you are slow, but because the content you're consuming is engineered to make standing still feel like falling behind. That feeling has a cost. It pulls you toward chasing the next thread instead of finishing the thing in front of you.

I've watched smart engineers I respect spiral on this. Not because they're undisciplined. Because the stream is calibrated to break discipline.

Why it doesn't compound

The repackager has a problem they don't talk about. They have to keep posting, forever, at the same intensity, or it ends.

Every thread starts from zero credibility. Nothing they wrote yesterday matters to the algorithm tomorrow. There's no body of work, no compounding moat, no readers who follow because of a developing perspective, because there is no perspective. There's a content schedule and a hook template. The moment they stop, the audience evaporates, because the audience was never theirs. It belonged to the algorithm.

The practitioner who ships and writes from what they shipped has the inverse problem, which is actually a feature. They post less. Each post is harder to write. Growth is slower. But every post adds to a body of work that demonstrates judgment, and judgment is the only thing that compounds in this game. Year three, the practitioner has a moat. Year three, the repackager is still chasing the algorithm with a fresh hook template.

The bet is on which game has a longer runway. I'm betting against repackaging.

The structural opportunity

Here's what I keep noticing, and it's the thing that made me start building.

Every "AI content tool" on the market right now is built for the repackager. Paste a video, get a thread. Paste a blog, get 12 LinkedIn posts. Schedule, repeat, scale. The entire stack assumes the user has nothing original to say and needs help manufacturing the appearance of authority. That's a real market. It's also a saturated one.

The opposite user has been ignored. The operator who has shipped, made decisions, and watched things break already has a body of work and a perspective. They don't need help manufacturing authority, because they have it. What they need is the inverse stack: a system that turns their actual lived practice into distribution without diluting what makes their voice theirs.

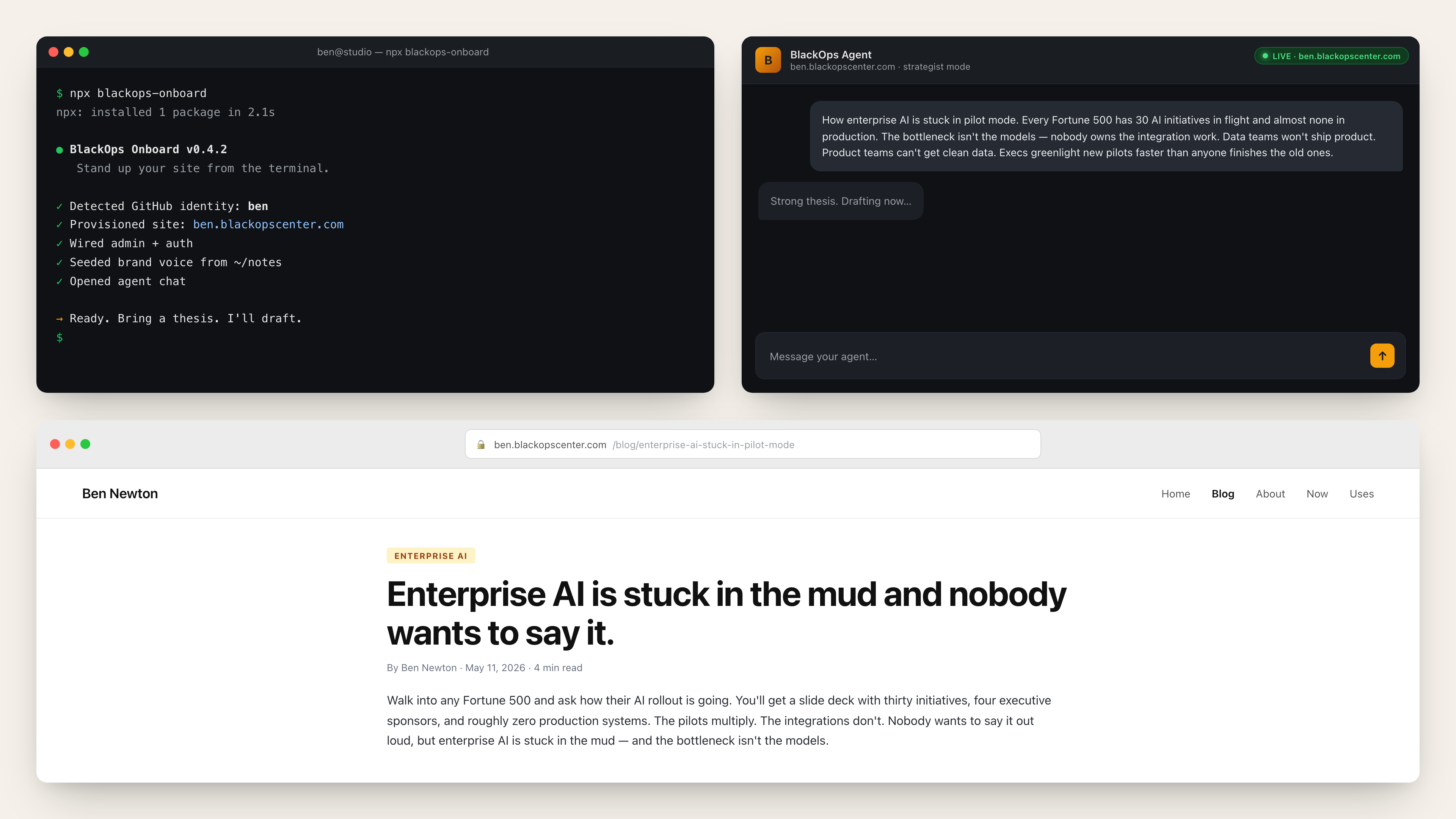

That's what I've been building. It's called BlackOps Center, it's at blackopscenter.com, and it's the publishing infrastructure I wanted to exist for people who already have something to say. Knowledge synced from where the work actually happens. A brand voice that keeps you from sounding like everyone else. Output channels that respect the substrate instead of grinding it into 7 numbered sections with a follow CTA between each one.

I'm not pitching it here. I'm naming it because the post would be dishonest without it. The reason I notice the pattern in such forensic detail is that I'm building the alternative, and the contrast is the daily fuel for the work.

The bet

The slop will keep winning short-term. The 4.8 million views will keep coming. New repackager accounts will spin up every week to chase the same template. None of that is going to stop.

The bet is that the audience is starting to notice. That readers who got burned by stale model recommendations and "secret" protocols that aren't secret will start filtering harder. That the practitioners who kept posting their judgment, slowly, through the noise, will be the ones with readers in 2028.

The market for paraphrase is saturated. The market for judgment is wide open.

Pick the harder game. Even if it grows slower. Especially if it grows slower.


I wrote this post inside BlackOps, my content operating system for thinking, drafting, and refining ideas, with AI assistance.