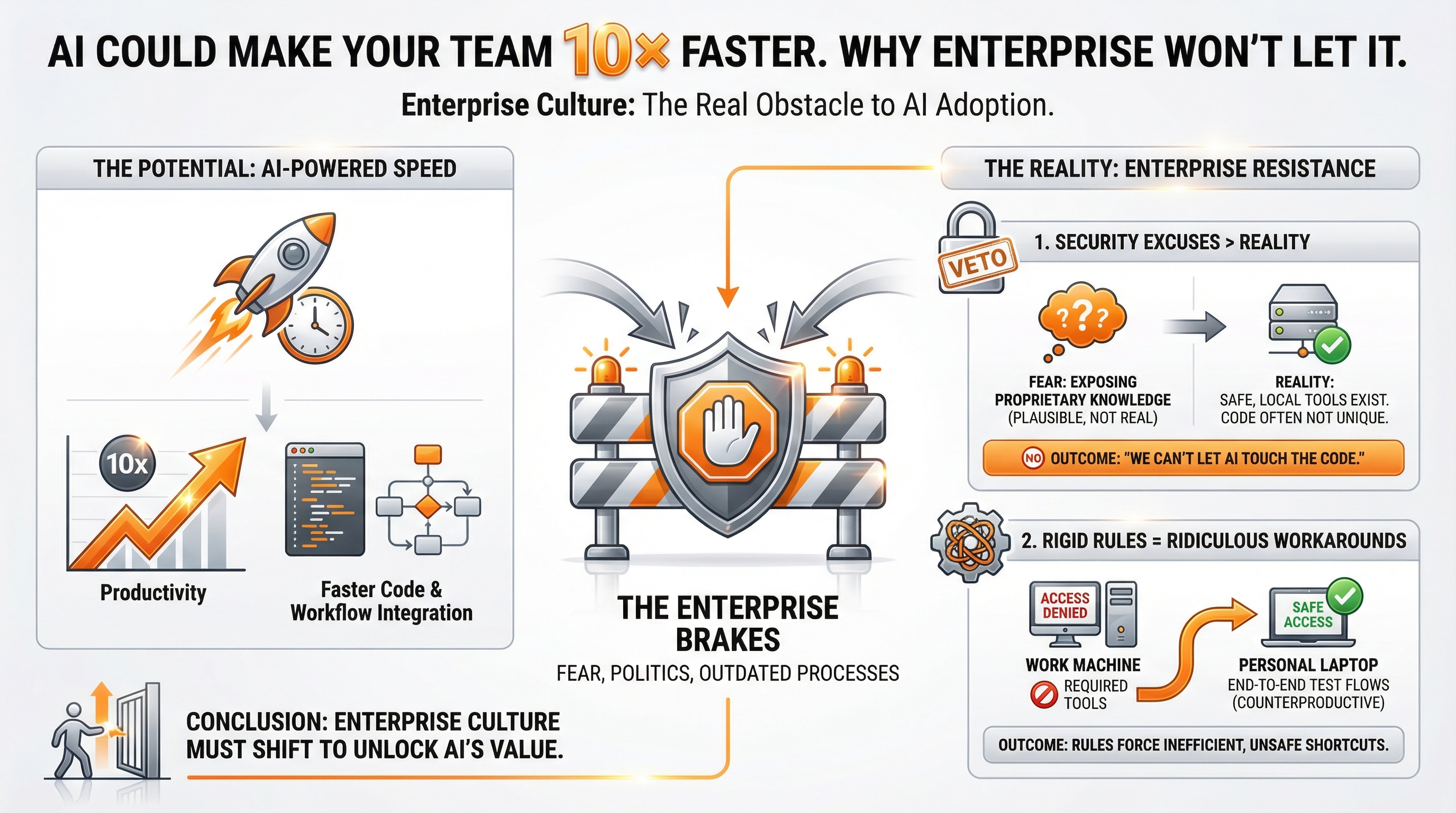

AI Could Make Your Team 10× Faster. Here’s Why Enterprise Won’t Let It.

AI isn’t the problem. Fear, politics, and outdated processes are.

Enterprises love to talk about AI as proof that they’re modern. They host workshops, create “innovation committees,” and put generative models in slide decks as if that alone means they’re embracing the future. But when it comes time to actually use AI in the real work - inside the code, inside the workflow - the brakes slam on instantly. And it’s not because AI is risky or unproven. It’s because fear, politics, and outdated processes are baked into every layer of the organization.

AI could make teams dramatically faster. Enterprise culture is what stops it.

1. Security Excuses Carry More Weight Than Reality

Security teams hold the strongest veto power. When you’re responsible for every possible risk, “no” becomes the default answer. Their biggest fear isn’t developers misusing AI; it’s the belief that if an AI tool reads internal code, the outside world suddenly gains access to some critical proprietary knowledge.

The irony is that most enterprise codebases aren’t hiding anything groundbreaking or uniquely valuable. There’s no secret algorithm or revolutionary logic buried in the repo. And modern AI tools, particularly enterprise-tier offerings and locally hosted models, aren’t uploading code to public models or training on it. Meanwhile, developers already have full access to the codebase and everything inside it.

But the fear sounds plausible, and in enterprise environments, plausible fear beats practical benefit every single time. So instead of allowing safe, local AI tools that would save hours or even weeks, teams are told: “We can’t let AI touch the code.” Enterprise security often protects the perception of risk, not the reality of it.

2. Rigid Rules Create Ridiculous Workarounds

This is where things often become counterproductive. I’ve had to build end-to-end test flows on my personal laptop - not because it was ideal, but because my work machine didn’t have access to the required tools. On my own device, I had enough access to get the work done safely without violating any rules.

These aren’t rebellious shortcuts. They are the natural consequence of a workflow that blocks progress instead of enabling it. When people can’t use the right tools, they find alternative paths that are often safer, simpler, and more productive than the official ones.

That’s the cost of blocking AI experimentation: it doesn’t prevent workarounds, it guarantees them.

3. The Resistance Isn’t Technical. It’s Human.

People assume project managers resist AI, but PMs generally just want the work delivered. The friction comes from groups who feel threatened by unclear boundaries or automation:

- Security, because any ambiguity defaults to denial.
- QA, because AI can generate full test suites as fast as it generates code.
- Legal, because their job is to eliminate hypothetical liability.
- Other oversight roles, each with their own interpretation of “risk.”

Add a few layers of management on top, and requirements turn into a slow-motion game of telephone. By the time a requirement like “Add validation to the form” passes through the chain, five different groups have five different ideas of what that actually means.

No one wants to say: “There’s a faster way to achieve the same result.”

And the moment AI enters the conversation, the instinct becomes: slow it down.

4. AI Would Already Be Saving Millions If It Were Allowed

Here’s a simple truth: just being able to ask an AI assistant, “Where is this business rule implemented?” or “Show me the flow of this logic,” would save days of reading and backtracking inside massive codebases.

But because AI tools can’t “touch the code,” that reading stays manual, and those days of it stretch into entire sprints.

Contrast that with my work using AI freely outside of enterprise restrictions. I’ve built full E2E flows, automated repetitive tasks, and shipped more features in a weekend than entire teams can ship in a sprint. The difference wasn’t talent or tooling - it was freedom to use the tooling.

5. Enterprises Aren’t Slow Because AI Is Hard

The real reasons are cultural and structural:

- The entire system is optimized for risk avoidance, not speed.
- Too many people interpret requirements differently.
- Processes built for a pre-AI world haven’t been updated.
- Experimentation is treated as danger instead of opportunity.
- No one wants to be the person who approved something new.

But the biggest reason is this: many organizations don’t fully trust their senior engineers to use good judgment with AI. And that’s ironic, because senior engineers are the ones who can use AI the most safely and effectively to deliver 10× the output.

6. The First Step Is Simple: Allow Experimentation

If I were in charge of enterprise AI adoption, the very first change I’d make is giving teams freedom to experiment. Let them use local AI tools in isolated sandboxes without four months of approvals. Let them try things. Let them see what’s possible.

A team that experiments learns.

A team that learns adapts.

A team that adapts wins.

A team that’s blocked stays blocked.

7. AI Is Not the Enemy

AI isn’t a threat waiting to replace good developers. It’s a multiplier.

It makes strong engineers significantly more effective.

It eliminates grunt work.

It scaffolds, validates, improves, and accelerates.

This isn’t theory. I’ve experienced the before-and-after. Teams that embrace AI will dramatically outperform those that don’t, and the gap won’t close.

8. The Companies That Win Will Be the Ones That Stop Delaying

Not the ones with the biggest budget.

Not the ones with the most committees.

Not the ones still running “exploration meetings” in 2025.

The winners will be the companies that trust their best engineers, remove unnecessary blockers, and allow AI to become a natural part of daily work.

AI isn’t the risk.

Standing still is.

Teams that empower engineers with AI will run circles around the ones still debating it.

I wrote this post inside BlackOps, my content operating system for thinking, drafting, and refining ideas — with AI assistance.

If you want the behind-the-scenes updates and weekly insights, subscribe to the newsletter.